Understanding AI-Generated Content and Authorship

My Role

I led this research initiative end-to-end, owning both the strategic framing and hands-on execution of the study. I partnered with content, product, and design stakeholders to define the core research questions around AI authorship, trust, and transparency, ensuring the work aligned with broader content strategy and user confidence goals.

I also designed the experimental framework, including the three authorship disclosure variants. I moderated sessions, analyzed eye-tracking and facial expression data, and synthesized behavioral, emotional, and attitudinal findings into clear, actionable insights.

The Challenge

As Angi began experimenting with AI-generated content at scale, questions emerged around user trust, credibility, and transparency. While AI offered efficiency and coverage benefits, it was unclear how different forms of AI authorship disclosure might impact user perception, engagement, and confidence—particularly for high-intent content like cost guides.

The core challenge was to understand:

How users emotionally and behaviorally respond to AI-generated content

Where and how AI authorship should be disclosed

Whether disclosure increases transparency without eroding trust or engagement

The Process

Research Design

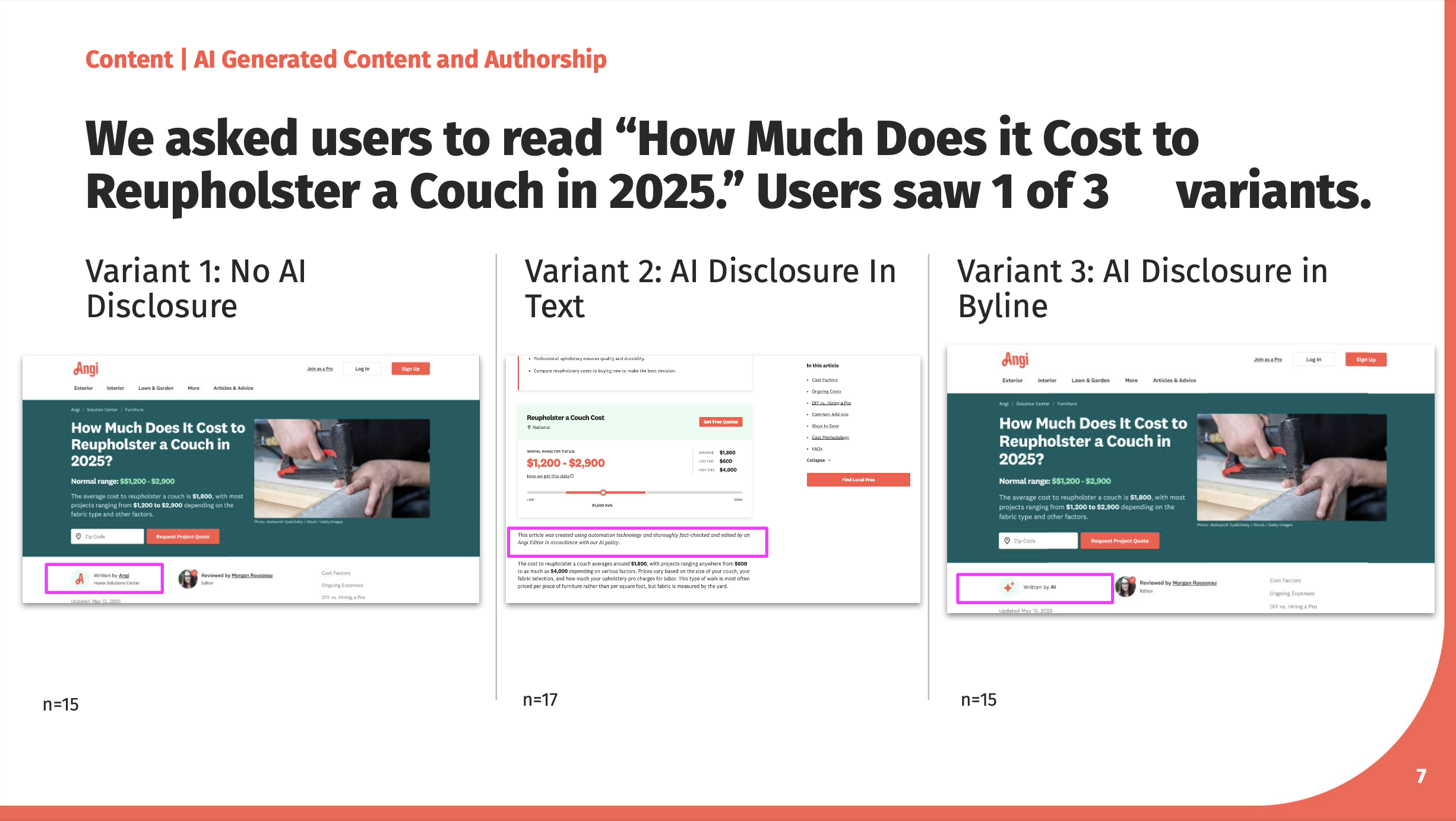

I designed a comparative study using three variants of a cost guide (“How Much Does It Cost to Reupholster a Couch?”), each with a different approach to AI disclosure:

Variant 1: No AI disclosure

Variant 2: AI disclosure presented in soft, humanized in-text language

Variant 3: AI disclosure presented in the byline as “Written by AI”

Each participant saw one variant. Participants in Variant 2 additionally took part in a biometric study using iMotions eye-tracking and facial expression analysis software.

Methods

Moderated usability sessions

Eye tracking (fixation, dwell time, revisits, saccades, etc.)

Facial expression analysis (confusion, surprise, frustration)

Post-task trust, credibility, and authorship comprehension questions

Analysis and Synthesis

I analyzed where users focused their attention, where they disengaged, and how emotional responses aligned with moments of disclosure, pricing interaction, and content density. Quantitative perception scores were paired with behavioral and emotional signals to create a more complete picture of trust and engagement.
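To illustrate how attention metrics such as dwell time and revisits can be derived from fixation data, here is a minimal Python sketch. It assumes a simplified, hypothetical input: a chronological list of (area of interest, fixation duration in milliseconds) pairs for one participant. The area names and durations are placeholders, not study data, and this is not the iMotions export format or the pipeline used in this study.

```python
from collections import defaultdict


def aoi_metrics(fixations):
    """Summarize fixations per area of interest (AOI).

    fixations: chronological list of (aoi_name, duration_ms) tuples
    for a single participant (hypothetical, simplified format).
    """
    metrics = defaultdict(lambda: {"fixations": 0, "dwell_ms": 0, "visits": 0})
    previous_aoi = None
    for aoi, duration_ms in fixations:
        entry = metrics[aoi]
        entry["fixations"] += 1
        entry["dwell_ms"] += duration_ms
        # A new visit starts whenever gaze enters the AOI from somewhere else.
        if aoi != previous_aoi:
            entry["visits"] += 1
        previous_aoi = aoi
    # Revisits are the visits that happen after the first one.
    return {
        aoi: {**entry, "revisits": entry["visits"] - 1}
        for aoi, entry in metrics.items()
    }


# Placeholder fixation sequence, for illustration only.
sample = [
    ("byline", 180),
    ("highlights", 420),
    ("highlights", 310),
    ("body", 250),
    ("highlights", 200),
]
result = aoi_metrics(sample)
```

Counting a visit only when gaze arrives from a different area keeps consecutive fixations within one area from inflating the revisit count.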

Key Insights

Users consistently stopped reading after the first few paragraphs, signaling content length and repetition as primary engagement issues.

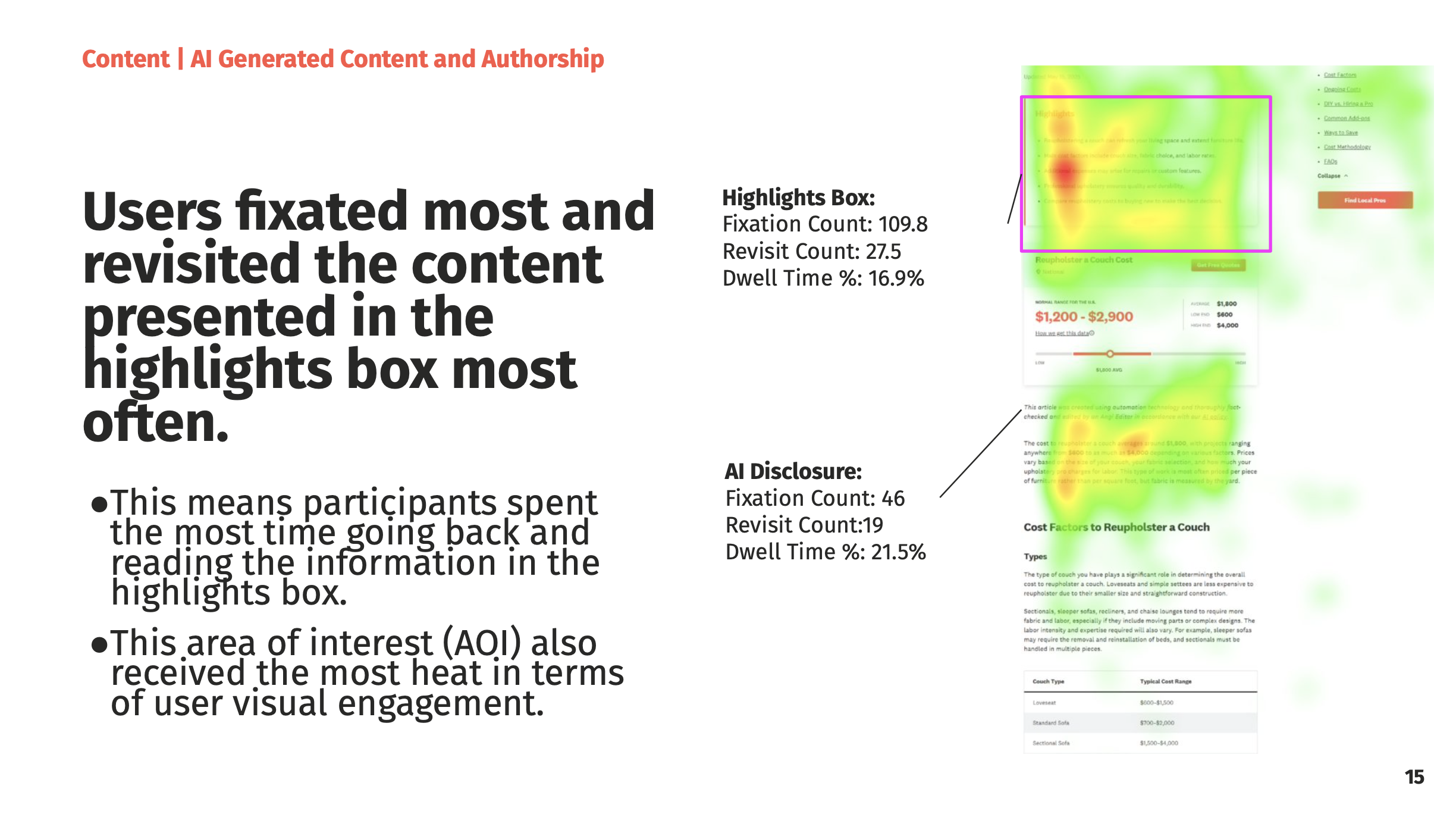

The highlights box received the most attention and revisits, reinforcing the value of scannable, summary-first content.

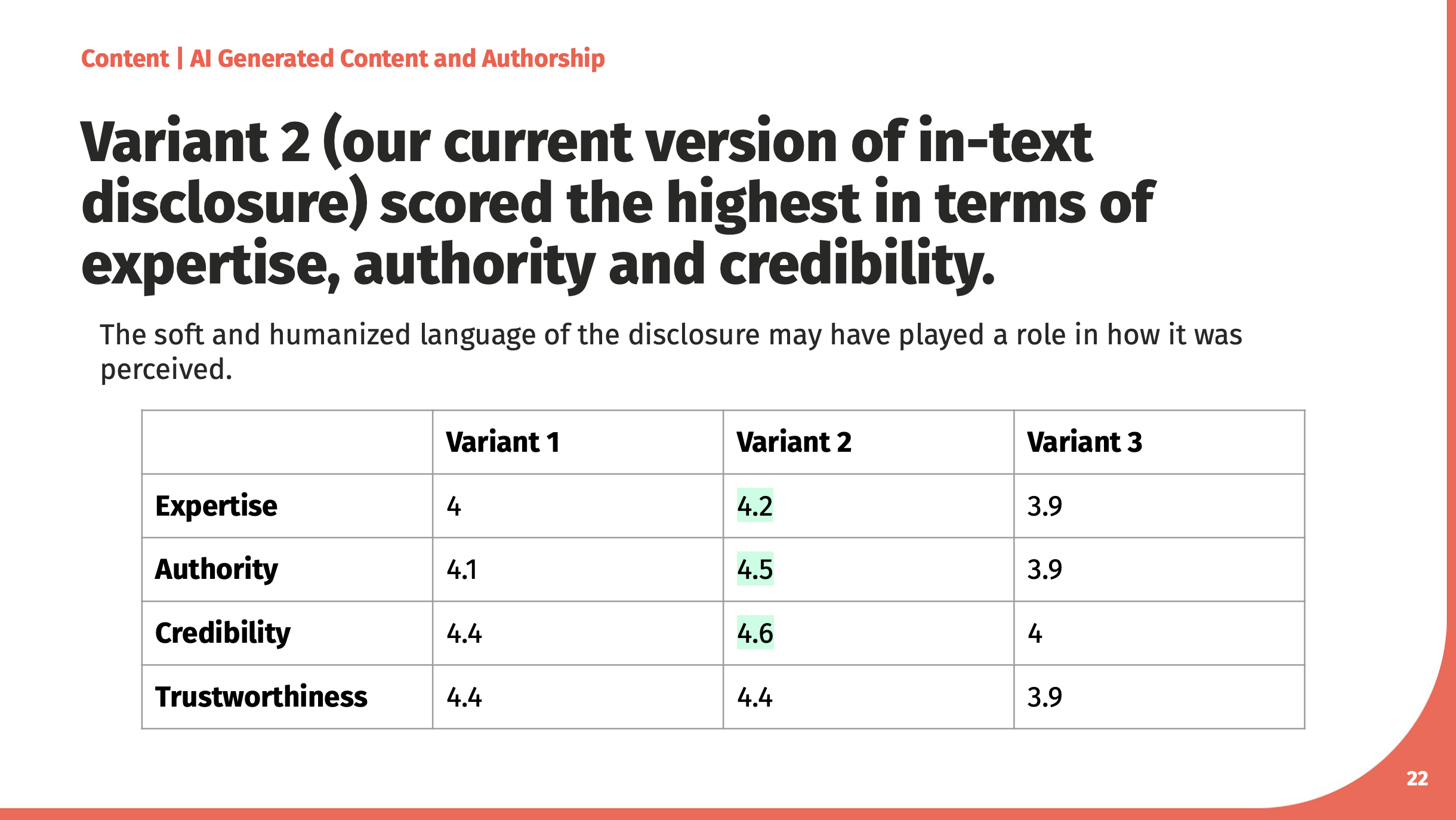

In-text AI disclosure (Variant 2) scored highest across expertise, authority, and credibility, outperforming both no disclosure and “Written by AI” byline disclosure.

Explicit “Written by AI” authorship caused users to question credibility and expertise, even when content quality was unchanged.

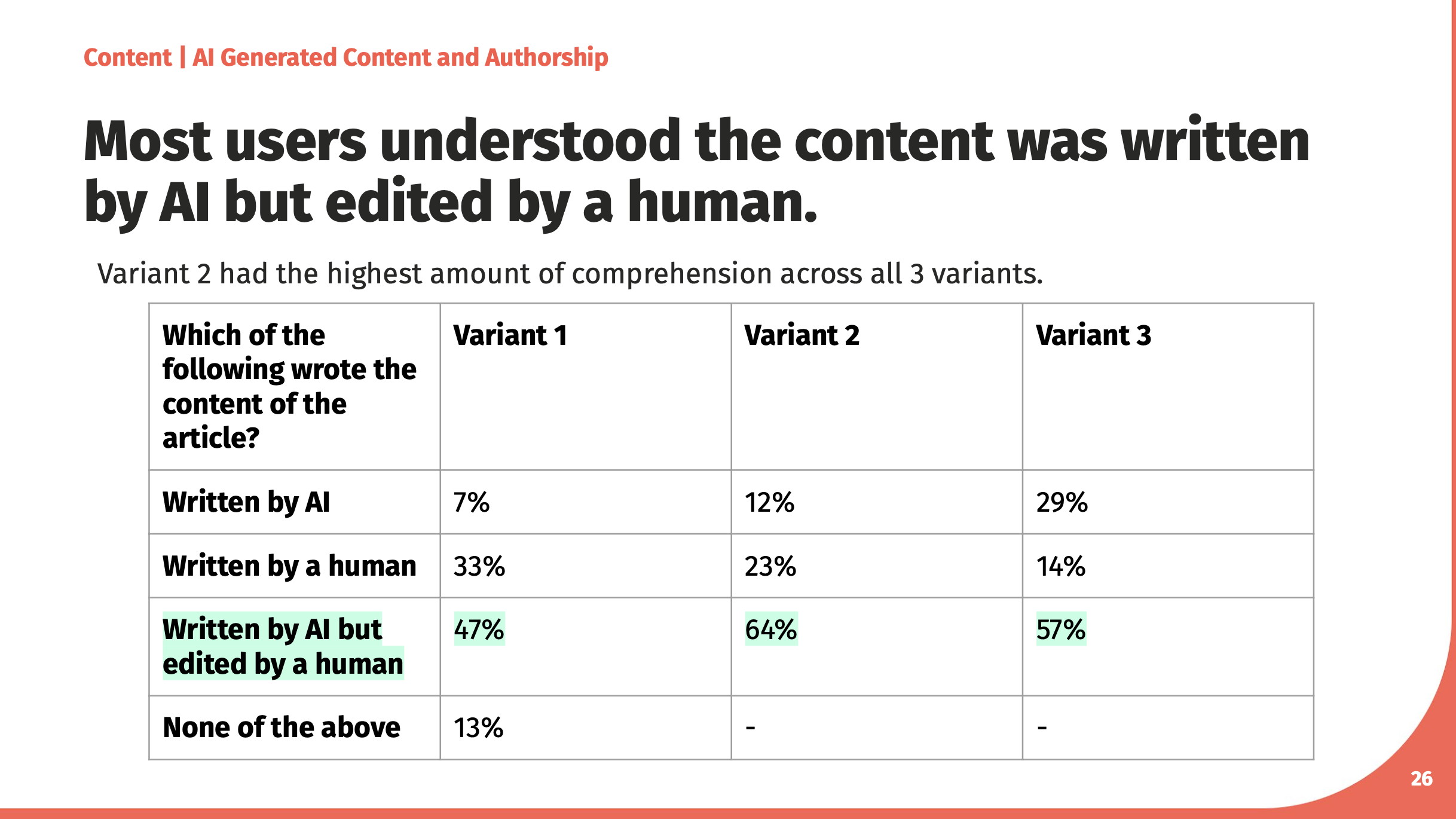

Most users were comfortable with AI-generated content when it was clearly edited by a human, and they perceived that human layer as critical to trust.

Conclusion

This research demonstrated that transparency around AI authorship is less about whether disclosure exists and more about how it is communicated. Humanized, contextual disclosure increased trust and credibility, while blunt or overly prominent AI labeling undermined confidence—even among users generally open to AI.

The findings directly informed content strategy and authorship guidelines, helping teams balance transparency with user trust while continuing to leverage AI responsibly. More broadly, the project established a research-backed foundation for how Angi can scale AI-generated content without sacrificing credibility or user confidence.

Reflections and Next Steps

This project reinforced the importance of designing AI experiences with emotional response in mind, not just ethical intent. While disclosure is often treated as a compliance requirement, this research showed it is also a UX moment—one that can either reassure or alienate users depending on tone, placement, and context.

If this work continued, the next step would be to validate these findings through A/B testing at scale, measuring the impact of disclosure language and placement on engagement, scroll depth, and conversion. I would also expand the research beyond cost guides to other AI-assisted surfaces, ensuring disclosure patterns remain consistent, human-centered, and aligned with evolving user expectations around AI.
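As one possible starting point for that A/B validation, the sketch below compares conversion rates between two disclosure treatments with a two-sided two-proportion z-test. The function name, the choice of test, and the counts are all assumptions for illustration. No such test was run in this study, and the numbers are placeholders rather than results.

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Two-sided z-test for a difference between two conversion rates.

    conv_a, conv_b: number of converting users in each arm
    n_a, n_b: total users exposed in each arm
    Returns (z statistic, two-sided p-value).
    """
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    # Pooled rate under the null hypothesis that both arms convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Placeholder counts: arm A = byline disclosure, arm B = in-text disclosure.
z_stat, p_val = two_proportion_z_test(conv_a=100, n_a=1000, conv_b=130, n_b=1000)
```

The same comparison could be repeated for engagement or scroll-depth thresholds, with the caveat that testing many metrics at once calls for a correction for multiple comparisons.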